AI Knowledge Assistant

The best use of an expert's time isn't answering basic questions.

Role: UX & Product Design

Timeline: 4 weeks

Team: Product, Engineering, Support

Overview

The client's support team had a triage problem. Expert engineers were fielding basic queries that users could have resolved themselves, had they had the means to find what they needed and, in some cases, known how to ask for it.

I designed an AI-powered knowledge assistant that coached users toward better context upfront, reducing the volume of support requests and getting everyone faster answers.

Problem

Support channels were overwhelmed, but not because users had genuinely complex problems. Most tickets were repeats. The real issue was that users submitted vague, incomplete requests because they didn't know what context was required. Lack of context slowed everything on both ends: users waited longer, and support agents spent time asking clarifying questions before they could even begin to help.

The deeper challenge was designing for a wide range of experience levels. A seasoned engineer and a first-time user approach the same tool completely differently, and the support experience wasn't accounting for that gap at all.

Goal

Shift the support model from reactive to self-service without making the experience feel like an extra task.

Research & Process

Discovery

Without direct access to end users, I worked closely with the client's subject matter experts (SMEs), interviewing 12 of the 20 I contacted to understand where the support system was breaking down. I also analyzed support ticket patterns to identify recurring query types and map where resolution was slowest.

Synthesis

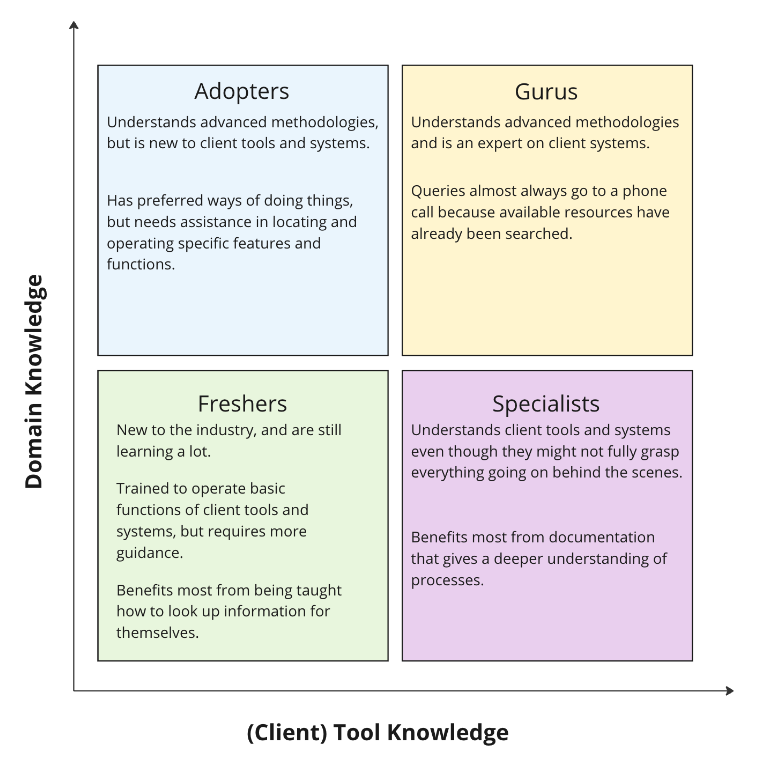

The research revealed four distinct user groups, each with different support needs based on their experience level and domain knowledge. More experienced users provided better context and got faster results. The system was inadvertently rewarding expertise rather than enabling everyone equally.

Ideation

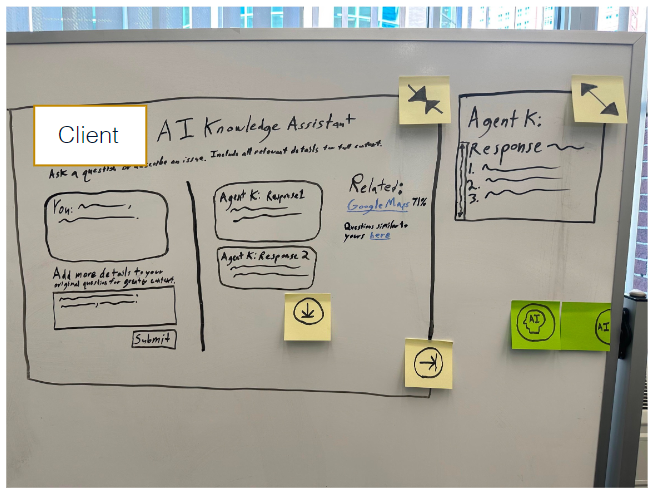

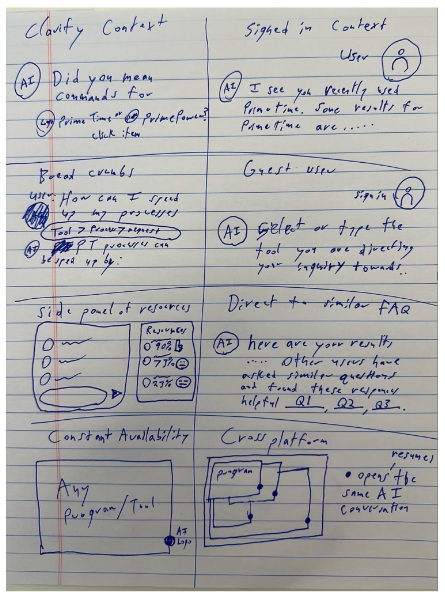

Early ideation explored two directions: clarifying context before submission versus signing users into context automatically. We tested both approaches against real ticket language from the SME interviews.

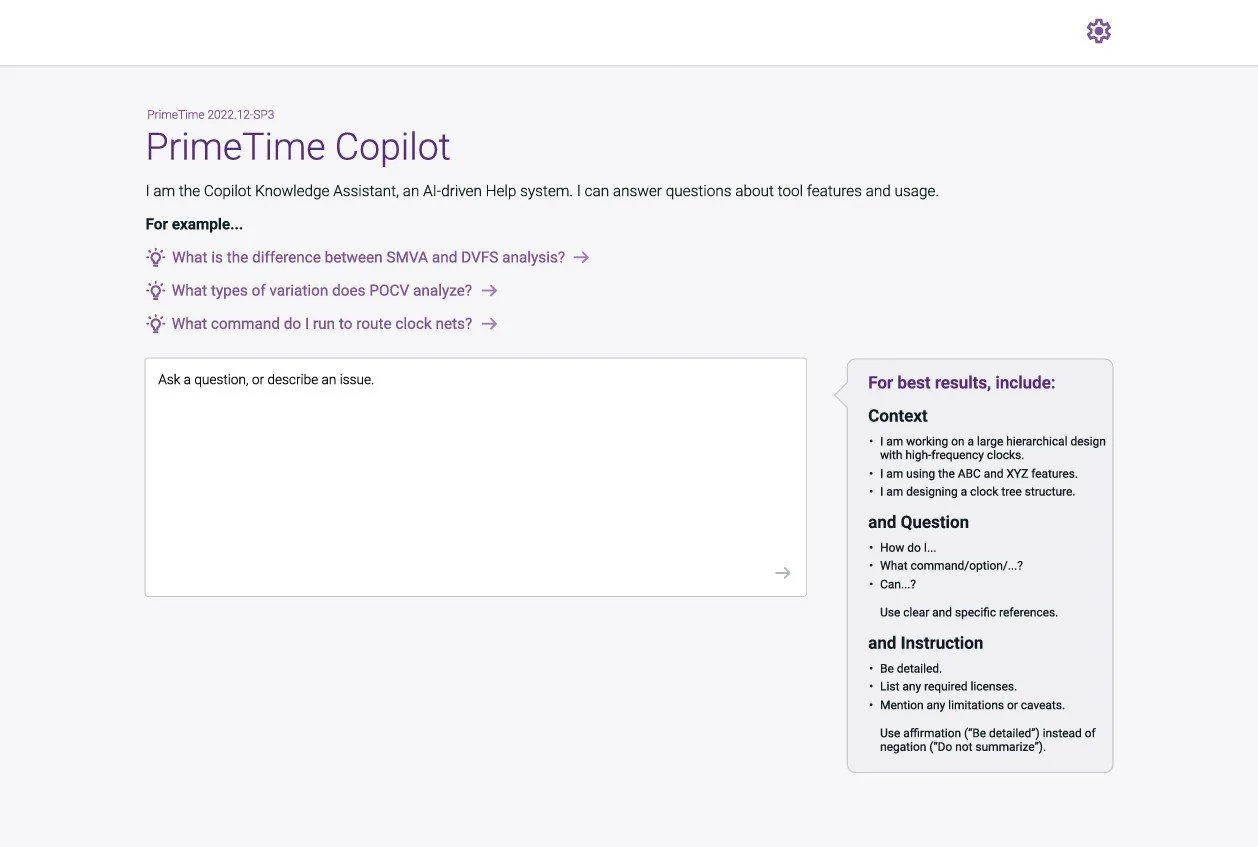

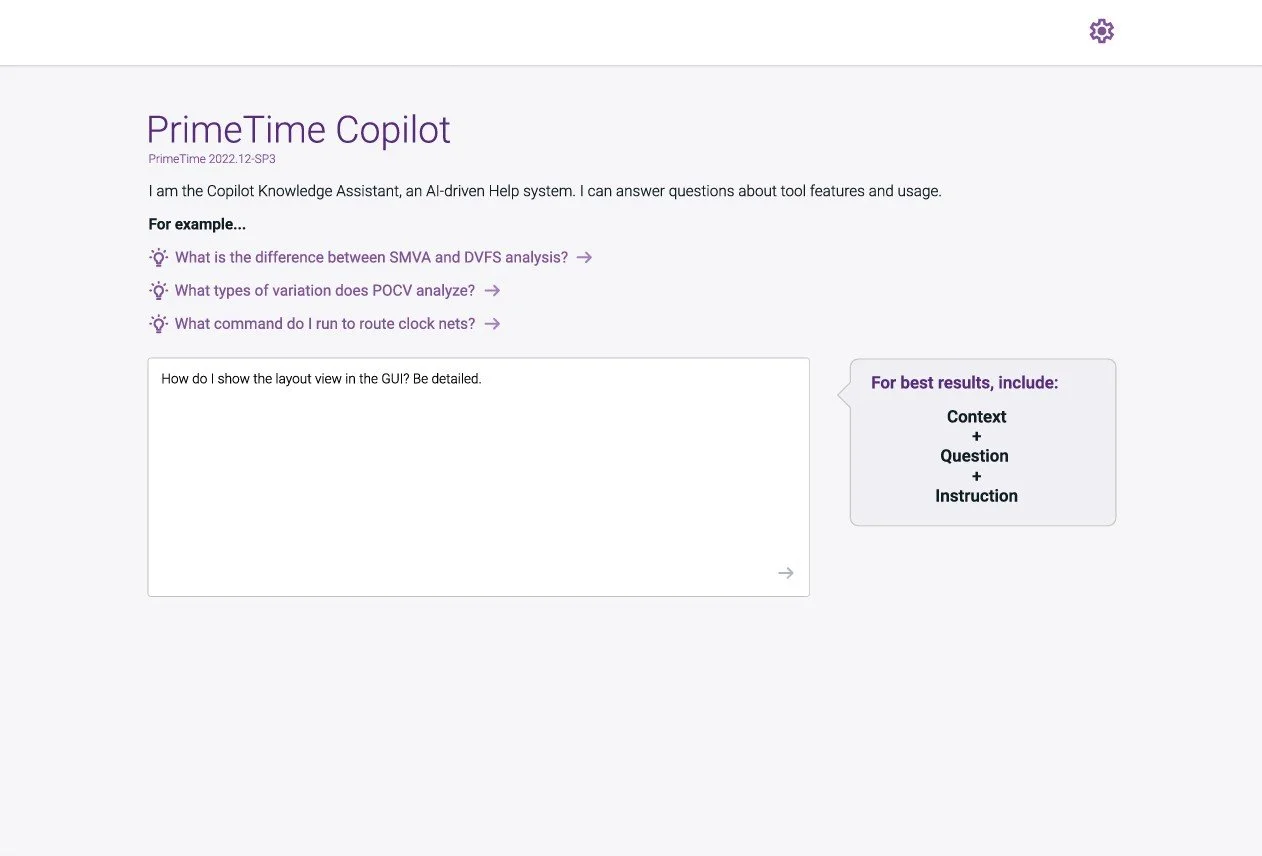

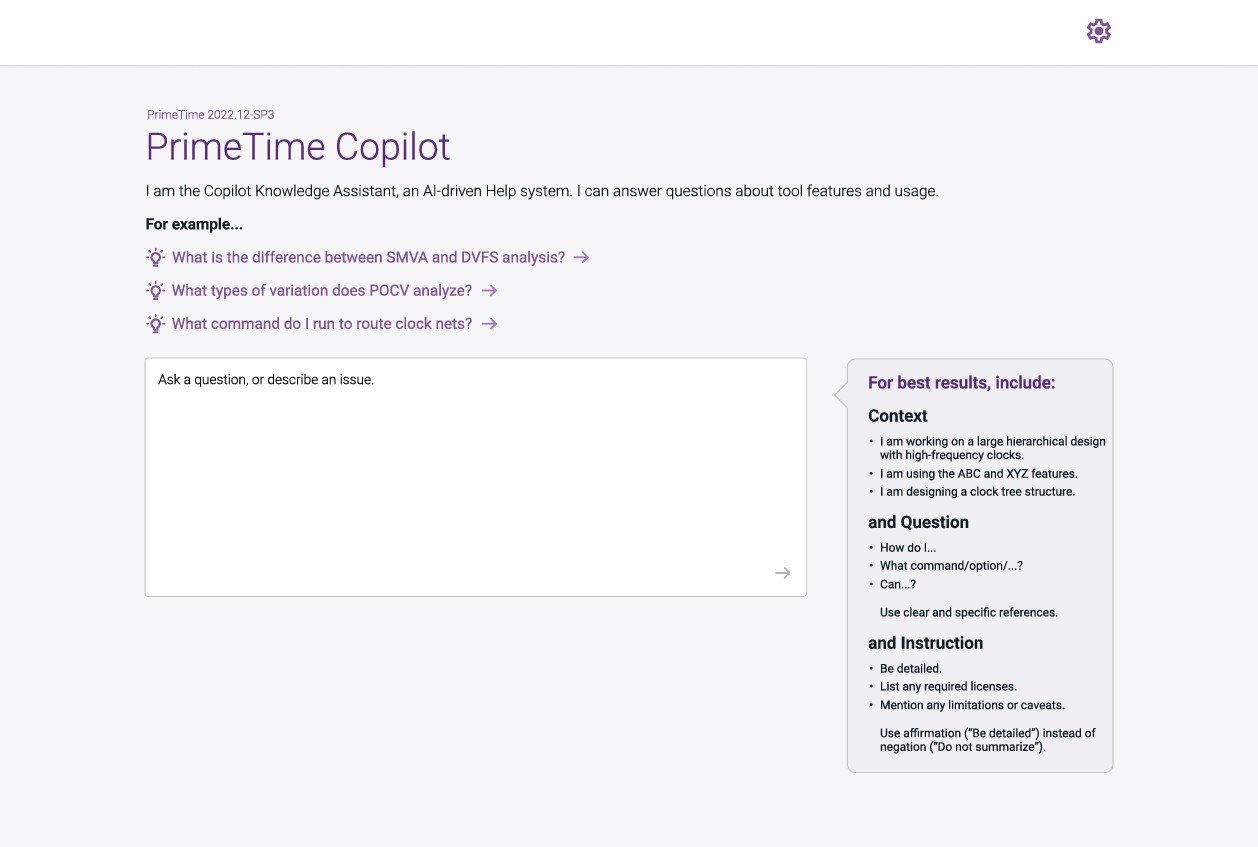

Rather than redesign the entire interface, I focused on one high-leverage intervention: a contextual guidance panel that coached users toward better inputs without interrupting their flow. The goal was to make expert behavior the default, not the exception. The design moved from 'ask anything' to 'ask better.'

Design Strategy

Key Insight: Users weren't failing because they lacked knowledge; they were failing because they lacked guidance. That distinction changed everything about how I approached the design.

Solution

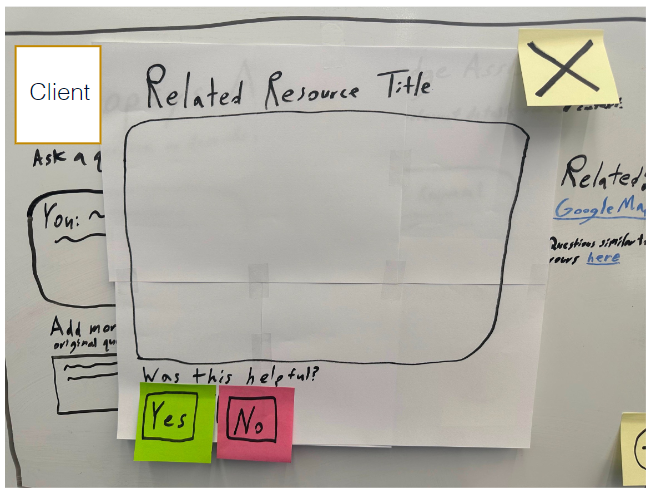

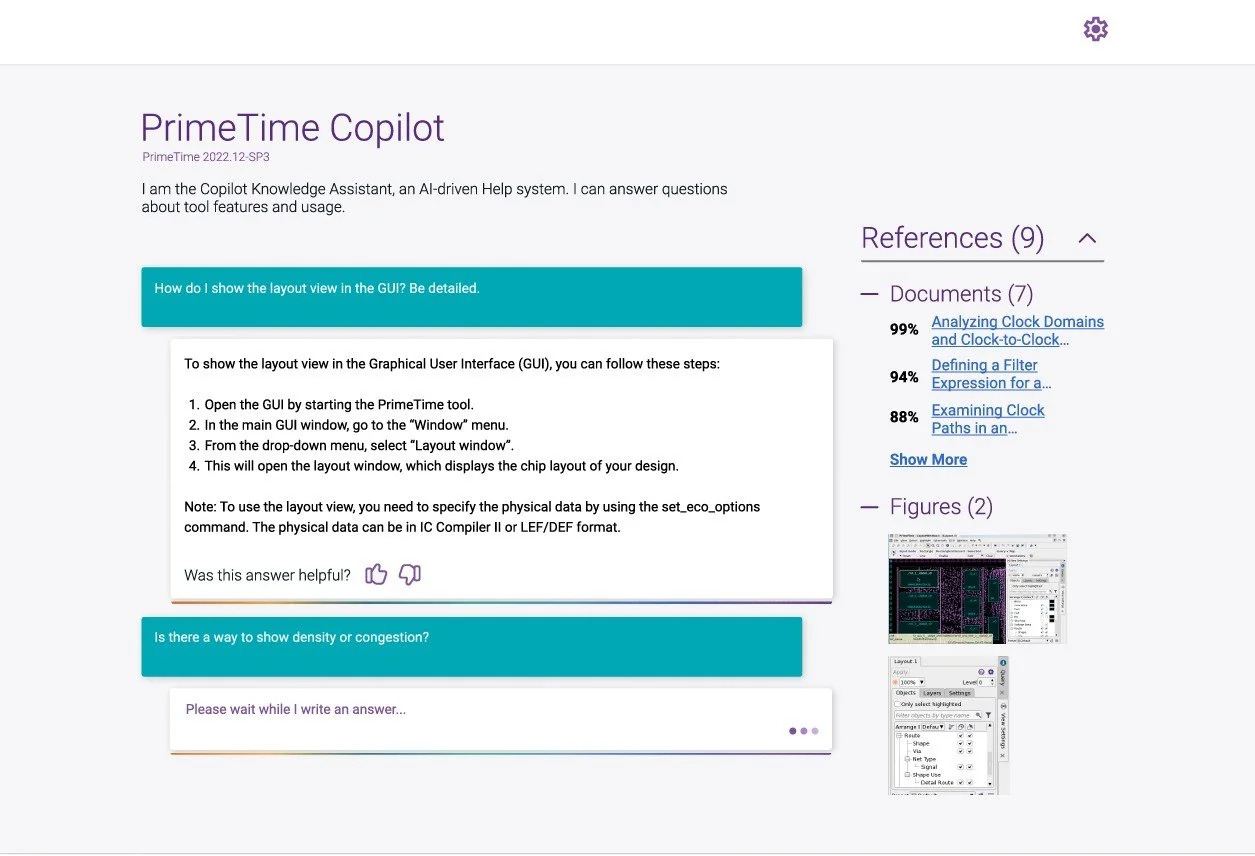

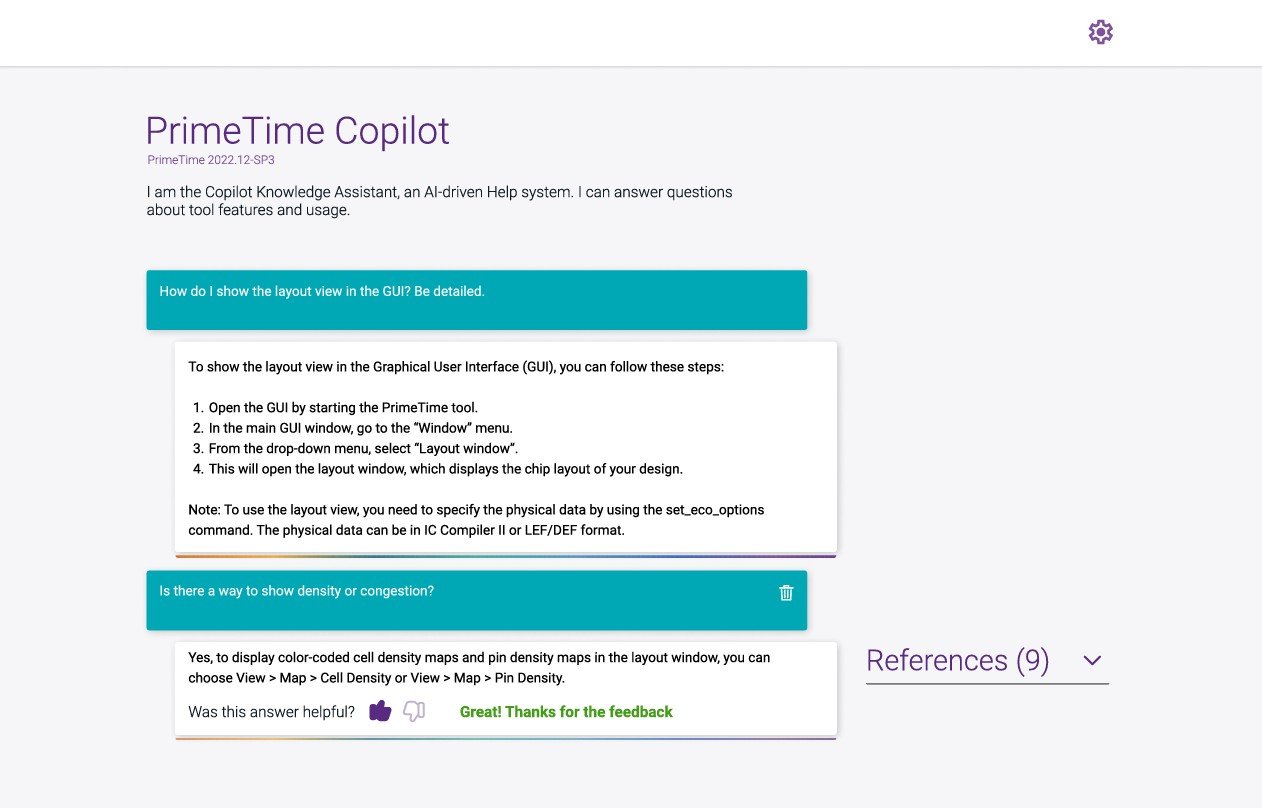

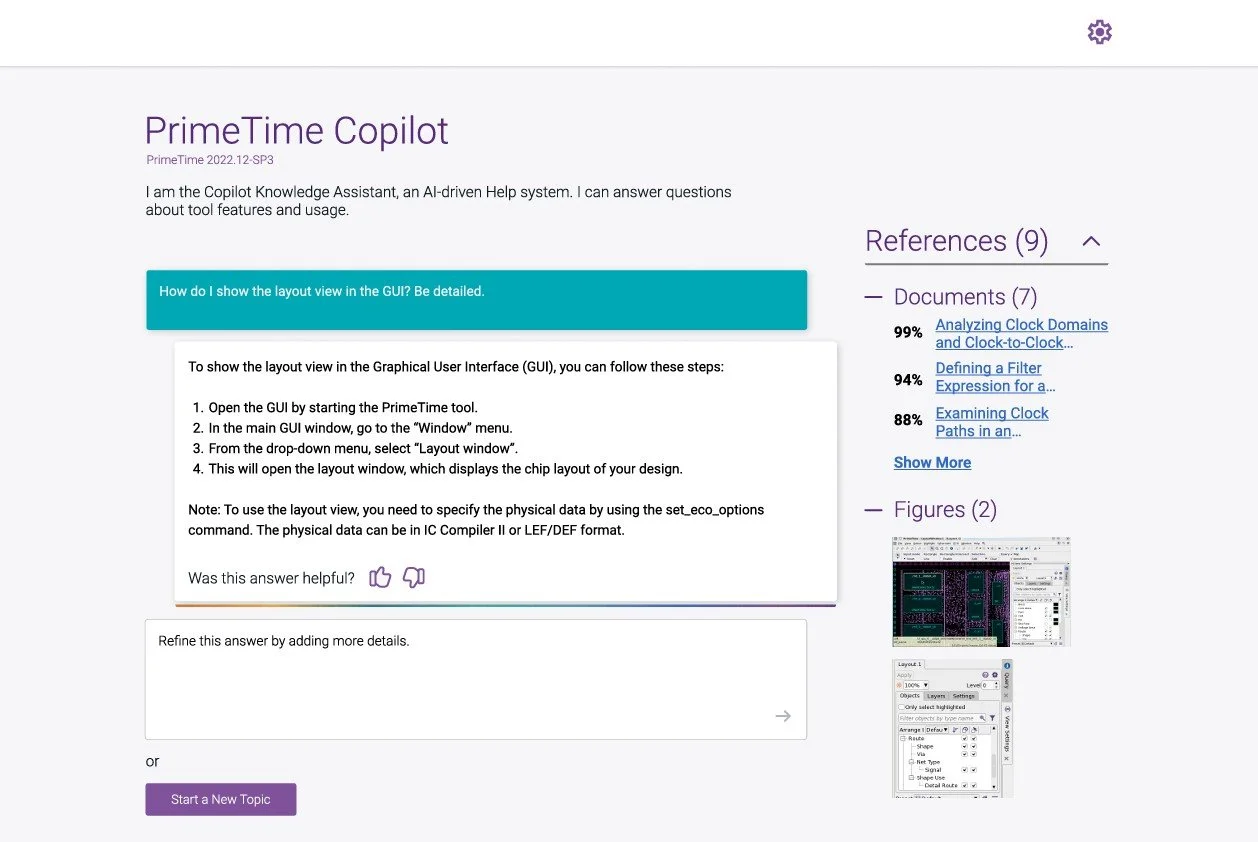

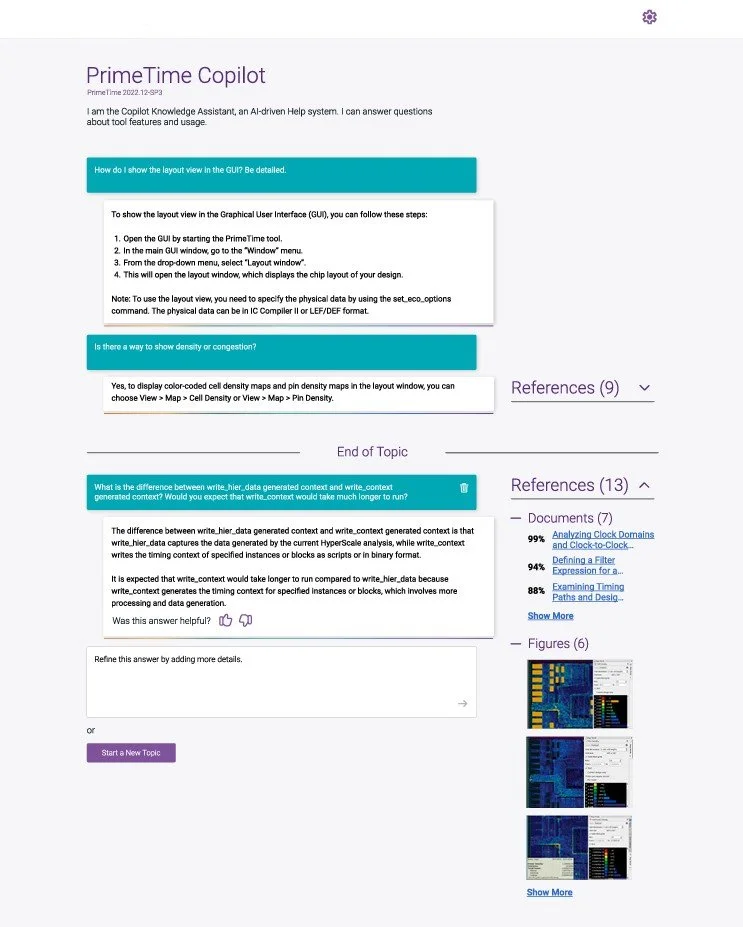

The final experience introduced a guided input field with contextual prompts that helped users describe their issue with greater specificity upfront. The guidance panel surfaced when needed and stayed out of the way when it wasn't. References, figures, and related documents were made available alongside answers, allowing users to go deeper without escalating to a human.

Impact

The redesigned experience reduced helpdesk volume for routine queries by improving self-service success, freeing SME time for complex problem solving. It also increased resolution speed for common issues and improved the overall quality of user-submitted requests, reducing dependency on live support agents for questions the system could answer itself.

Reflection

The biggest lesson was that guidance beats features. My instinct early on was to add more prompts, more structure, more options. What actually worked was restraint: one well-placed coaching panel that appeared contextually without interrupting the flow. Small surface, significant operational impact.

This project also reinforced something I carry into every engagement: when you can't access end users directly, your research has to work twice as hard. The SME interviews weren't just a workaround; they became the foundation of everything.

Next Steps

The next meaningful iteration would be personalizing guidance based on user type: a Fresher needs different prompts than a Guru. The groundwork is already there in the persona research; it just needs to be built into the experience layer.